See also my more recent blog post http://coredataintegration.isys.bris.ac.uk/2013/06/16/important-documentation-for-soa-the-interface-catalogue-and-data-dictionary/

Part of Enterprise Architecture activity involves examining the “As Is” in terms of the organisation’s systems architecture and developing a vision and specification of the “To Be” systems architecture. Many systems are integrated with each other in terms of data – data is ideally stored once and reused many times. In an HE context this could involve reusing student records information in a Virtual Learning Environment or surfacing research publications stored in a research information system on the University’s public website, and so on. So, the enterprise integration architecture is a key puzzle to unravel and to improve over time.

In your organisation you may be lucky enough to have had a central, searchable database in which all systems interfaces have been documented to a useful level of detail since the beginning of time. If so, I envy you, because at the University of Bristol we are not so fortunate – yet. An important part of my exploration of the “As Is” for my organisation this last year has been to attempt to map out our complex systems architecture and to understand how mature our integration architecture is. Do we need to design and develop a Service Oriented Architecture, and if so are we ‘ready’ for it in terms of the maturity of our existing integration architecture and our clarity regarding future requirements? The problem has been a lack of documentation to date. Integrations between systems have often been built according to the particular developer’s preference at the time, and documented neither in terms of how the integration was implemented (perhaps via ETL, using AJAX, using a Web Service, or merely via some Perl scripts) nor with respect to the rationale for that method of integration (for a brief, useful analysis of options for integration that I believe is still relevant several years on, please see MIT’s chart at http://tinyurl.com/MITIntegrationOptions). Information about these integrations remains in people’s heads. And often these people leave (well, actually, a lot of people stay, as the University is a popular place to work, but you see my point).

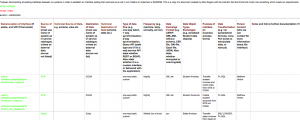

At our University there are a lot of point-to-point integrations between systems, far more than we would like. To understand the extent of the problem I have introduced an Interface Catalog, which developers across teams are now undertaking to fill with information. There were some exchanges of information about using interface catalogs on the ITANA email list earlier this year. I used this and other resources to develop a pro forma format for our University of Bristol interface catalog. I started this off in an internal wiki, as I’ve found a wiki to be a good forum for relatively informal, collaborative development of standards in the first instance. My idea was not to impose a format, but to build consensus around what we really need to record and how consistent developers need to be in constructing the information in each record. In the early days it looked something like this (this doesn’t show the full set of column headings):

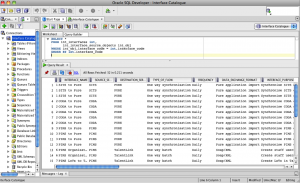

After several iterations and use by developers, we reached consensus on the terminology we wished to use and the level of detail required. The team has now implemented the catalog in an Oracle database, mainly so that we can easily control vocabulary (avoiding different developers describing the same type of interface, class of data object, etc. in different ways) and also so that we can more easily search the catalog.
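To illustrate what controlling vocabulary in a database can look like, here is a minimal sketch. It uses Python's built-in `sqlite3` module as a stand-in for Oracle, and the table names (`flow_type`, `interface_catalog`), the helper `add_interface` and the "HR to TalentLink" row are assumptions made up for the example rather than our actual schema. The idea is simply that permitted terms live in a lookup table and a foreign key rejects anything else.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite only enforces foreign keys when this pragma is switched on.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
    -- Hypothetical lookup table holding the permitted vocabulary.
    CREATE TABLE flow_type (name TEXT PRIMARY KEY);
    -- Hypothetical, cut-down catalog table for illustration only.
    CREATE TABLE interface_catalog (
        interface_name TEXT NOT NULL,
        type_of_flow   TEXT NOT NULL REFERENCES flow_type(name)
    );
    INSERT INTO flow_type VALUES ('One way synchronisation');
""")


def add_interface(name, flow):
    """Insert a record; return False if the flow type is not in the vocabulary."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO interface_catalog VALUES (?, ?)", (name, flow)
            )
        return True
    except sqlite3.IntegrityError:
        return False


# A term from the controlled vocabulary is accepted...
first_ok = add_interface("SITS to Pure", "One way synchronisation")
# ...while a free-text variant of the same idea is rejected.
second_ok = add_interface("HR to TalentLink", "one-way sync")
```

In this sketch a developer who types their own wording for a flow type gets an error at entry time, rather than creating a second spelling that later queries would miss.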

There is a good level of buy-in to using this catalog which I am very pleased with. It is time-consuming to fill in information retrospectively, but developers report that it is quick and easy to record information about interfaces as they go along.

Some were initially unsure about the value of the catalog, as it is clear that the database will run to many hundreds of rows, if not thousands, pretty quickly. However, this catalog is not for browsing: it is a dataset for analysis and querying, and there are several expected benefits that we hope to reap when we reach a critical mass of data:

- When a system is up for replacement we will be able to query the catalog to see how many interfaces there are to that system and thus assess the work involved in integrating similarly (or indeed in new ways) with the incoming system.

- When developers leave, they won’t have taken essential knowledge with them in their heads – centrally-held documentation is key!

- The make-up of our current integration architecture will become clearer and we will be able to produce a coherent analysis of the extent to which we are depending on point-to-point integrations between systems (which are hard to sustain over time and reduce the agility of our overall architecture), and also where different integrations are repeating similar tasks in different ways (for example, transferring/transforming the same data objects). In the former case we will be able to make the case more clearly for a future, more mature integration architecture, and in the latter case we can look to offer core APIs providing commonly required functionality in a standard way (the reuse advantage).

- The catalog will help us to introduce a more formal, standardised and thus consistent approach to the way we integrate new technical systems. If we choose ETL, say, then the developer should record the justification for that solution; if ESB was discounted, we can record why; and so on.
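The first and third benefits above come down to fairly simple queries. The following is a rough sketch only, again using Python's `sqlite3` in place of Oracle. The cut-down table, the sample rows (including the "VLE" and "Website" system names) and the two helper functions are invented for illustration and are not taken from our real catalog.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE interface_catalog (
        interface_name           TEXT,
        source_service_code      TEXT,
        destination_service_code TEXT,
        source_object_code       TEXT,
        data_transformation      TEXT
    )
""")
# Made-up sample rows, purely for illustration.
rows = [
    ("SITS to Pure", "SITS", "Pure", "STUDENT_ENROLMENTS", "XSLT"),
    ("SITS to VLE", "SITS", "VLE", "STUDENT_ENROLMENTS", "Java and PL/SQL"),
    ("Pure to Website", "Pure", "Website", "RESEARCH_PROJECTS", "none"),
]
conn.executemany("INSERT INTO interface_catalog VALUES (?, ?, ?, ?, ?)", rows)


def interfaces_touching(system):
    """Interfaces that read from or write to a system due for replacement."""
    cur = conn.execute(
        "SELECT interface_name FROM interface_catalog "
        "WHERE source_service_code = ? OR destination_service_code = ? "
        "ORDER BY interface_name",
        (system, system),
    )
    return [row[0] for row in cur]


def objects_moved_in_different_ways():
    """Data objects that more than one transformation technology handles."""
    cur = conn.execute(
        "SELECT source_object_code, COUNT(DISTINCT data_transformation) "
        "FROM interface_catalog "
        "GROUP BY source_object_code "
        "HAVING COUNT(DISTINCT data_transformation) > 1"
    )
    return cur.fetchall()


affected = interfaces_touching("Pure")
duplicated = objects_moved_in_different_ways()
```

With the sample rows, `affected` lists both the interface feeding Pure and the one fed by it, and `duplicated` flags `STUDENT_ENROLMENTS` as an object handled in two different ways, which is the kind of candidate we would consider for a shared API.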

In the interests of standardisation, we are linking the names used to describe systems to our corporate services catalog and we are starting to join and interlink a Data Dictionary with the interface catalog (i.e. to help specify more precisely the data objects that are transferred and transformed between systems for every integration developed).

I am able to view and search the database using SQL Developer as my client software, and a simple SQL query is enough to list the catalog records.

If anyone wishes to know more about the full set of detail we’re capturing per interface, feel free to get in touch. I would also be interested in others’ experiences with interface catalogs.

I’d be interested in more detail about your interface catalog.

Hi Mark,

Update June 2013: For the latest schema please see http://coredataintegration.isys.bris.ac.uk/2013/06/16/important-documentation-for-soa-the-interface-catalogue-and-data-dictionary/

The ‘column headings’ for our interface catalog are currently:

INTERFACE_NAME (e.g. “SITS to Pure”)

SOURCE_SERVICE_CODE (e.g. SITS – name of system from which data extracted)

DESTINATION_SERVICE_CODE (e.g. Pure – destination system name)

TYPE_OF_FLOW (controlled vocabulary e.g. “One way synchronisation”)

FREQUENCY (e.g. daily, On Demand, every 5 minutes)

DATA_EXCHANGE_FORMAT (e.g. SOAP/XML, HTML/RSS)

INTERFACE_PURPOSE (textual summary of the business process this interface fulfils, e.g. “Transfer job vacancies to TalentLink”)

DATA_TRANSFORMATION (if data objects are not transformed via the interface then this records “none”, otherwise we will record something like “XSLT” or “Java and PL/SQL” to record the technology used to transform the data)

TECHNICAL_CONTACT (name of techy person deemed responsible for maintaining this interface)

BUSINESS_CONTACT (name of non techy person within the organisation who ‘owns’ this interface)

NOTES (in case there’s anything important to report about this interface)

LINK (URL of the REST web service, if one exists, or a link to further information about this interface held online)

ADDED_DATE (date interface catalog record entered)

ADDED_BY (user id of person who entered the interface catalog record)

AMENDED_BY

INTERFACE_CODE_1 (we use this to group several rows that might pertain to one interface – because some interfaces perform several distinct business processes in one go)

SOURCE_OBJECT_CODE (code for data object type transferred by the interface e.g. STUDENT_ENROLMENTS, RESEARCH_PROJECTS)
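As a rough illustration of how INTERFACE_CODE_1 groups rows, here is a small Python sketch. The `CatalogRecord` class, the `group_by_interface` helper, the code value "INT001" and the sample rows are all made up for the example; only the field names follow the column headings above, and only a few of them are included.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CatalogRecord:
    """A cut-down, hypothetical view of one interface catalog row."""
    interface_code_1: str
    interface_name: str
    source_service_code: str
    destination_service_code: str
    source_object_code: str
    interface_purpose: str


def group_by_interface(records):
    """Collect the business purposes recorded under each interface code."""
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec.interface_code_1].append(rec.interface_purpose)
    return dict(grouped)


# Invented sample data: one interface performing two business processes.
records = [
    CatalogRecord("INT001", "SITS to Pure", "SITS", "Pure",
                  "STUDENT_ENROLMENTS", "Transfer enrolments"),
    CatalogRecord("INT001", "SITS to Pure", "SITS", "Pure",
                  "RESEARCH_PROJECTS", "Transfer research projects"),
]
purposes = group_by_interface(records)
```

Here both rows share the same code, so `purposes` holds a single entry listing the two processes that the one interface carries out.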

Hope that helps,

Nikki

Hi Nikki,

This looks good, as we are working on something similar, but we would like to automate the process of collecting data from SITS and our Finance system. Do you have any experience of keeping this up to date, and how do you engage your data owners to do the job?

Thanks,

Ivonne

Hi Ivonne. Keeping the interface catalogue up to date is really important. We have added a requirement to our IT system ‘go live’ checklist for the interface catalogue to be updated for any system upgrade or new/changed system implementation. In other words, we have embedded maintenance of the interface catalogue in our existing change management processes, which means that we catch a lot of data integration changes this way and enforce documentation of them. I have also had to do lots of communications with all technical team managers, who then reinforce the message with their technical teams regularly. We have placed responsibility for maintaining a record in the interface catalogue on the technical contact for the destination system involved in any integration.

With regard to master data governance more generally, we have created a governance structure that runs from lower-level Data Stewards up to more senior Data Managers, and includes a senior-level Master Data Governance board that meets quarterly. We are currently appointing people to these roles and training them, and will launch Master Data Governance formally via a series of workshops in December this year. The mission is to get the importance of master data governance recognised at a very high level in the University. I plan to blog about how we’re doing this at some point (also see http://enterprisearchitect.blogs.bristol.ac.uk/2014/04/01/spaghetti-grows-in-system-architectures-not-an-april-fools-day-joke/). To assist with launching this initiative we used a Master Data Consultant to advise us, and we have a Change Manager assisting on the project to help cope with the cultural change and process change aspects.

Hope that helps,

Nikki